Quarterly insights: Cybersecurity

Not a micro segment: Microsegmentation’s ability to protect the cloud is a big opportunity

As bad actors have learned to take advantage of the freedom inside a protected environment and internal threats have become better understood, cybersecurity spend has rapidly expanded from protecting internal assets from outsiders to better controlling lateral data flows within protected environments.

In a world where lateral data flows for a business process can now span on-premise infrastructure, a company’s own data center, third-party data centers such as AWS, Azure, and Google Cloud, and hosted cloud applications from third parties, microsegmentation is a key solution in the arsenal to protect business assets. Microsegmentation divides data center and other computing assets, regardless of physical location, into logical groupings, sometimes down to very basic component and workload levels, and then creates and enforces policies for each segment.

While microsegmentation can be highly effective and has great promise, its relatively early stage of evolution, combined with its complexity, means there is a long runway for the market to grow as innovative cybersecurity companies invest to introduce better solutions. We highlight just a few of the companies providing some of today’s leading solutions.

TABLE OF CONTENTS

Includes discussion of PANW, VMW, ZS and three private companies

- Proliferation of lateral data flows shifts the nexus of vulnerability

- Microsegmentation is key to protecting today’s expansive computing environment

- Enormous complexity requires automation

- Common implementation challenges

- Tradeoffs among microsegmentation solution types

- Some leading microsegmentation players

- See heavy investment, new products and M&A ahead

- Cybersecurity index continues to soar, pulls further ahead of Nasdaq and S&P

- M&A activity slows in Q1

- Q1 private placements on track to rise from Q4 low

Proliferation of lateral data flows shifts the nexus of vulnerability

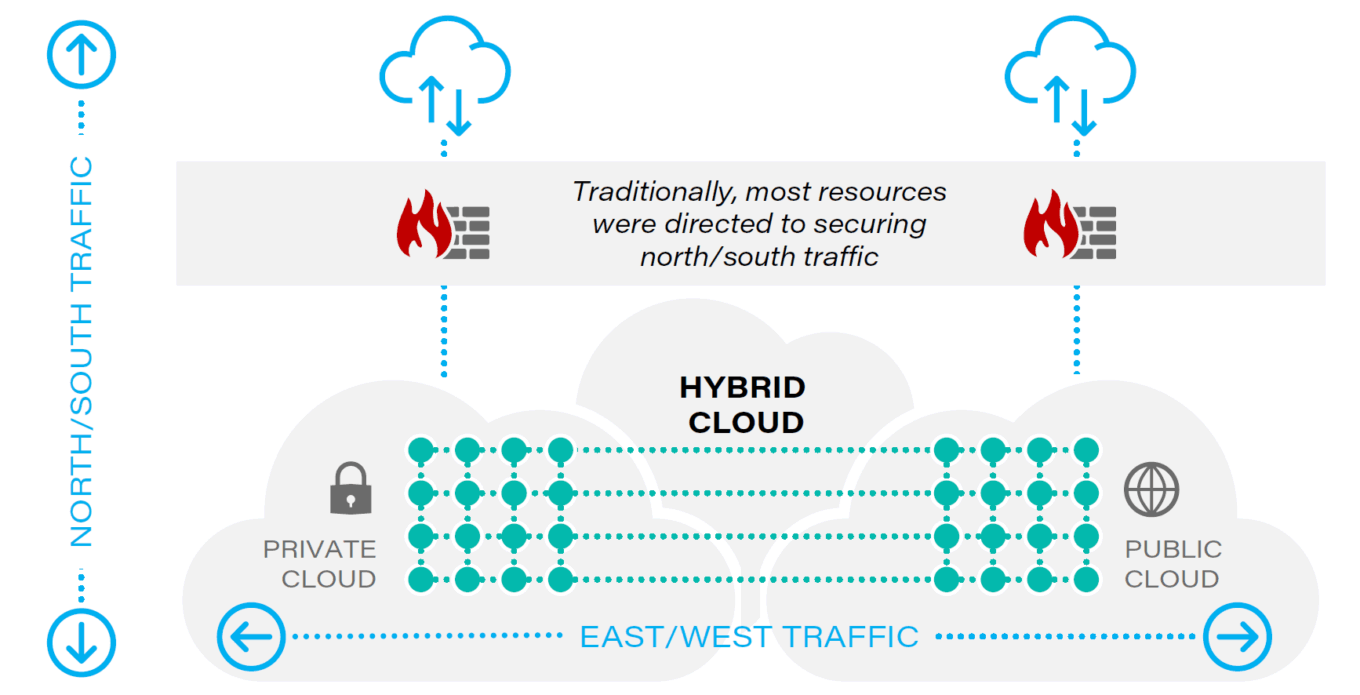

Most historical security spend has focused on preventing unauthorized or inappropriate access from outside a protected environment and keeping sensitive data inside a protected environment from leaving. These data movements are commonly referred to as north-south traffic. East-west traffic, or lateral data flows, refers to flows between points within a protected environment. For example, a person sending account information to a client creates north-south traffic, while an internal accounting system pulling data from an internal payroll database creates east-west traffic.
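
The north-south versus east-west distinction can be sketched in a few lines of Python. This is only an illustration: the address ranges, the sample addresses and the function names are assumptions made for the example, and it presumes a simple environment whose boundary maps cleanly to IP ranges.

```python
from ipaddress import ip_address, ip_network

# Hypothetical address ranges standing in for the "protected environment".
PROTECTED_NETWORKS = [ip_network("10.0.0.0/8"), ip_network("192.168.0.0/16")]


def is_internal(address: str) -> bool:
    """Return True if the address falls inside any protected range."""
    addr = ip_address(address)
    return any(addr in network for network in PROTECTED_NETWORKS)


def classify_flow(source: str, destination: str) -> str:
    """Label a flow by where its two endpoints sit relative to the boundary."""
    source_inside = is_internal(source)
    destination_inside = is_internal(destination)
    if source_inside and destination_inside:
        return "east-west"    # both endpoints inside: a lateral flow
    if source_inside or destination_inside:
        return "north-south"  # the flow crosses the boundary
    return "external"         # neither endpoint is ours


# An internal accounting system pulling data from an internal payroll database.
print(classify_flow("10.1.0.5", "10.2.0.9"))
# An internal user sending account information to an outside client.
print(classify_flow("10.1.0.5", "203.0.113.20"))
```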

In the early days of networking, east-west traffic received much less scrutiny than north-south traffic. However, as internal threats became more appreciated and bad actors learned to take advantage of the freedom they found once they had penetrated a protected environment, security postures changed to pay much more attention to east-west traffic.

Fast forward to today’s networking environment where data is located in a combination of on-premise infrastructure, a company’s own data center, third-party data centers such as AWS, Azure, and Google Cloud, and hosted cloud applications from third parties. A single application or workflow may use and pull data from all of these.

For example, a cloud-based payroll system may pull hours worked by employees from systems hosted in a third-party data center and from on-premise databases. In this type of data environment, enterprises cannot rely on north-south-focused, network-based firewall architectures: lateral data flows are just as critical, and trying to firewall every segment based on IP address mapping is difficult and largely ineffective at preventing attacks.

TABLE 1: Changing environment: lateral data flows

Source: First Analysis.

In this world of increasingly lateral data flows, the most complicated and difficult-to-protect systems are those that exist in a hybrid cloud environment where application code, databases, web servers and other resources needed for an application or process are virtualized across multiple clouds. Protecting these systems is even more challenging if they include dynamic elements with workflows that are created and ended within seconds.

Microsegmentation is key to protecting today’s expansive computing environment

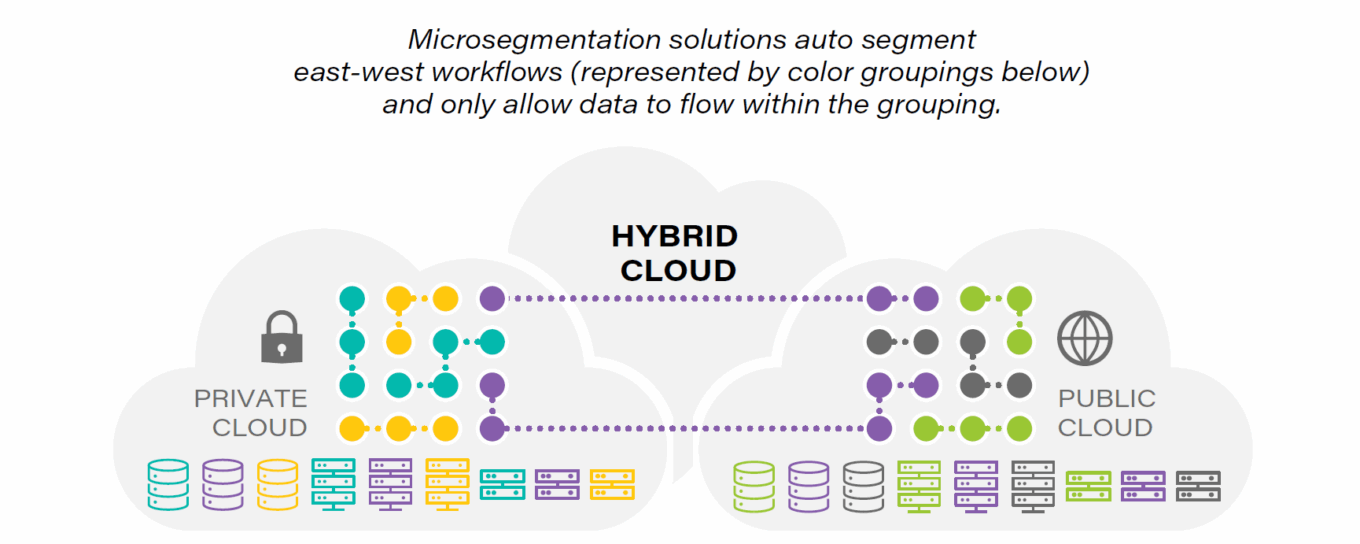

To help address this issue, companies are implementing microsegmentation. Microsegmentation is the process of subdividing data center and other computing assets into logical groupings, sometimes down to very basic component and workload levels, and then creating and enforcing policies for each segment. This segmentation can be done in various ways, each having benefits and drawbacks.

Two common approaches in the cloud are segmentation based on application and identity. Application-based microsegmentation looks at an application’s workflow, groups all the resources necessary to run that application (such as the user interface, the application logic rules, and the databases the application accesses), and creates and enforces policies that control data flows among these resources.
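
As a rough sketch of the application-based approach, the Python below groups one application's resources into a segment and permits only the flows explicitly declared among them. The `AppSegment` class, the resource names and the payroll example are hypothetical and do not reflect any vendor's data model.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class AppSegment:
    """One microsegment: the resources an application needs, plus allowed flows."""

    name: str
    resources: set[str]
    allowed_flows: set[tuple[str, str]] = field(default_factory=set)

    def allow(self, source: str, destination: str) -> None:
        """Declare a permitted flow; both ends must belong to this segment."""
        if source not in self.resources or destination not in self.resources:
            raise ValueError("both endpoints must be resources in the segment")
        self.allowed_flows.add((source, destination))

    def permits(self, source: str, destination: str) -> bool:
        """A flow is permitted only if it was explicitly declared."""
        return (source, destination) in self.allowed_flows


# Hypothetical payroll application: user interface, logic tier and database.
payroll = AppSegment("payroll", {"payroll-ui", "payroll-logic", "payroll-db"})
payroll.allow("payroll-ui", "payroll-logic")
payroll.allow("payroll-logic", "payroll-db")

print(payroll.permits("payroll-ui", "payroll-logic"))  # declared flow
print(payroll.permits("payroll-ui", "payroll-db"))     # undeclared shortcut
```

In this sketch the user interface cannot reach the database directly even though both sit in the same segment, because only the flows the application's workflow requires were declared.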

Identity-based microsegmentation, which we believe has tremendous momentum at the moment, examines each resource and determines which people or what other computing resources should have access to it. Identity-based microsegmentation, when combined with a zero-trust approach (which blocks all access to a resource unless the identity of the requester can be confirmed to a pre-determined level of confidence and the requester is specifically allowed access under the security policies), provides a high level of security and can be used for both north-south and east-west traffic in a single policy design.
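
The zero-trust rule described above (deny unless the identity is confirmed to a set confidence level and the requester is specifically allowed) can be sketched as follows. The confidence score, the 0.9 threshold, the identities and the `ALLOWED` table are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessRequest:
    identity: str               # a person or a computing resource
    resource: str               # what the requester wants to reach
    identity_confidence: float  # 0.0 to 1.0, from a hypothetical verification step


# Hypothetical policy: explicit (identity, resource) pairs that may connect.
ALLOWED = {
    ("accounting-service", "payroll-db"),
    ("alice@example.com", "payroll-ui"),
}
MIN_CONFIDENCE = 0.9  # assumed pre-determined confidence level


def is_allowed(request: AccessRequest) -> bool:
    """Default deny: both the identity check and the policy check must pass."""
    if request.identity_confidence < MIN_CONFIDENCE:
        return False
    return (request.identity, request.resource) in ALLOWED


# The same check applies to a person (north-south) and a service (east-west).
print(is_allowed(AccessRequest("alice@example.com", "payroll-ui", 0.97)))
print(is_allowed(AccessRequest("accounting-service", "payroll-db", 0.95)))
print(is_allowed(AccessRequest("accounting-service", "payroll-db", 0.50)))
```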

TABLE 2: Microsegmentation solution

Source: First Analysis.

Enormous complexity requires automation

Regardless of which microsegmentation type is used, even a medium-sized enterprise will have hundreds of discrete resources with thousands of different paths, each requiring its own policy. Manually writing policies for these relationships is impractical, but the process can be automated in whole or in part. Usually the process involves the following steps.

- Understand and group existing data flows: By observing network traffic flows, microsegmentation software discovers and maps data flows to understand which resources appear to be needed for which workflows. Some solutions assume the existing patterns they observe are the correct baseline and group workflows into microsegments accordingly, while more sophisticated solutions visually present this data so security professionals can drill down to confirm that the baseline microsegmentation does not already reflect policy violations or misconfiguration.

- Create and apply policies: Most solutions either automatically create and apply policies that can be altered by security professionals or suggest policies that professionals can accept, reject or modify. Given the huge quantity of connections that require policies, most systems prioritize creating, applying and presenting policies for review based on automated or manual risk assessments.

- Implement and monitor: Enforcing policies is a high-stakes proposition, as any policy that blocks a legitimate path risks causing processes or applications to fail, often with material adverse business consequences. For this reason, many systems offer a test mode that generates alerts based on the policies rather than enforcing them. This provides an opportunity for security teams to identify policies that would otherwise block legitimate workflows. After an enterprise has implemented some initial microsegmentation rules, it must monitor them to ensure legitimate traffic continues to be allowed as its business evolves, using the solution to understand, group, and develop policies for new and changed resources and data flows.
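
The three steps above might look like this in a highly simplified form. The sketch assumes flows are recorded as (source, destination) pairs and that every observed flow becomes a candidate allow rule for human review; the function names and the `Mode` enum are hypothetical, and real products weigh risk and context far more carefully.

```python
from collections import Counter
from enum import Enum
from typing import Iterable, Set, Tuple

Flow = Tuple[str, str]  # (source resource, destination resource)


class Mode(Enum):
    TEST = "test"        # alert on violations, do not block
    ENFORCE = "enforce"  # block violations


def suggest_policies(observed: Iterable[Flow]) -> Counter:
    """Step 1 and 2: count observed flows; each distinct flow is a candidate rule."""
    return Counter(observed)


def evaluate(flow: Flow, approved: Set[Flow], mode: Mode) -> str:
    """Step 3: allow approved flows; otherwise alert (test) or block (enforce)."""
    if flow in approved:
        return "allow"
    return "alert" if mode is Mode.TEST else "block"


# Hypothetical traffic observed during the discovery period.
observed = [("web", "app"), ("app", "db"), ("app", "db")]
suggestions = suggest_policies(observed)

# A reviewer could reject or edit suggestions here; this sketch accepts them all.
approved = set(suggestions)

print(evaluate(("app", "db"), approved, Mode.ENFORCE))
print(evaluate(("web", "db"), approved, Mode.TEST))
print(evaluate(("web", "db"), approved, Mode.ENFORCE))
```

Running in `Mode.TEST` first surfaces flows that enforcement would block, which gives the security team a chance to approve legitimate ones before switching to `Mode.ENFORCE`.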

Common implementation challenges

With this complexity come implementation challenges that typically make implementation a measured process.

- A company’s workflows may have been misconfigured or compromised by malware before implementation starts. If so, automated grouping algorithms, which look at existing workflows, will likely allow this inappropriate access to continue. Such problems may be caught if people who understand both the workflows’ business purposes and network architecture review or oversee implementation.

- Some connections between resources may happen so infrequently that they are not captured during the period used to create microsegmentation units. As a result, microsegmentation policies may block these connections, which can be disastrous if the blocked connections are critical. This is one of the key reasons enterprises sometimes forgo tight enforcement and instead use microsegmentation tools for monitoring. Without mapping all appropriate resource connections for every workflow, it’s impossible to guarantee this problem won’t occur, so enterprises must balance the risk of breaking critical workflows against the risk of allowing malicious connections. One solution is to automatically block infrequent connections unless they are granted special limited-duration access by a manual or automated process, because these types of connections represent inherent security risk regardless of their criticality to the workflow. To decide which approach to take, enterprises usually consider how critical the workflow is and how sensitive the resource being protected is.

- Microsegmentation policies can hinder newly created legitimate workflows. For example, as an enterprise builds and tests a new application, it may want to access real-world data from production environments. But such access will likely be blocked by microsegmentation policies, which are based on normal production environment workflows. While this is a pain point for developers, allowing such connections could expose the enterprise to malicious code or other risks present in the development environment unless the entire development environment is protected to the same standard as the production environment. Though this is not the primary reason behind the “shift left” movement, which builds security into code at the earliest possible point, “shift left” can mitigate some of this risk.
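
The limited-duration access idea mentioned above for infrequent connections can be sketched as a table of expiring exceptions checked alongside the baseline policy. The `ExceptionGrants` class, the resource names and the two-hour window are hypothetical choices for the example.

```python
from datetime import datetime, timedelta, timezone
from typing import Dict, Set, Tuple

Flow = Tuple[str, str]  # (source resource, destination resource)


class ExceptionGrants:
    """Tracks temporary approvals for flows outside the baseline policy."""

    def __init__(self) -> None:
        self._expiry: Dict[Flow, datetime] = {}

    def grant(self, flow: Flow, duration: timedelta, now: datetime) -> None:
        """Approve a flow until now + duration."""
        self._expiry[flow] = now + duration

    def is_active(self, flow: Flow, now: datetime) -> bool:
        """True only while an unexpired grant exists for the flow."""
        expiry = self._expiry.get(flow)
        return expiry is not None and now < expiry


def decide(flow: Flow, baseline: Set[Flow], grants: ExceptionGrants,
           now: datetime) -> str:
    """Baseline flows pass; other flows need an active temporary grant."""
    if flow in baseline:
        return "allow"
    if grants.is_active(flow, now):
        return "allow-temporary"
    return "block"


baseline = {("app", "db")}
grants = ExceptionGrants()
start = datetime(2021, 3, 31, 9, 0, tzinfo=timezone.utc)

# Hypothetical quarter-end job that was never seen during discovery.
quarter_end_job = ("reporting", "db")
grants.grant(quarter_end_job, timedelta(hours=2), now=start)

print(decide(quarter_end_job, baseline, grants, start + timedelta(hours=1)))
print(decide(quarter_end_job, baseline, grants, start + timedelta(hours=3)))
```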

Tradeoffs among microsegmentation solution types

Microsegmentation solutions take a variety of forms, and no single solution is best for all circumstances. Enterprises will choose the approaches that best fit their specific environments and needs. Many of the most commercially successful solutions are agent-based, meaning small programs that connect to a central management tool must be deployed on the protected assets to gather data and enforce policy. Agent-based solutions are flexible and relatively straightforward to implement and manage. However, they generally run on layers 3 and 4 in the network stack, which means they cannot handle application-level (layer 7) policies and may lack visibility into certain asset classes. In addition, these solutions are only effective on the assets where they are deployed.

Since deployment itself helps identify high-risk and high-value assets via autodiscovery tools, a gradual pace of deployment means some high-risk and high-value assets may remain exposed for relatively long periods. Finally, while agents can be small, using relatively little computing power on the devices where they reside, they do consume some resources on those devices. Network-based approaches (sometimes called agentless) avoid these drawbacks but can be more complex and may have difficulty enforcing policies (or enforcing them consistently) in certain environments, such as bare metal implementations where there is no intervening operating system.
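
To illustrate why operating at layers 3 and 4 limits policy granularity, the sketch below compares a rule that sees only addresses, port and protocol with one that can also inspect the HTTP method and path. The rule tables, addresses and request fields are hypothetical, and exact-match paths are a deliberate simplification.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Request:
    source_ip: str
    dest_ip: str
    dest_port: int
    protocol: str                      # layer 4 protocol, e.g. "tcp"
    http_method: Optional[str] = None  # visible only at layer 7
    http_path: Optional[str] = None    # visible only at layer 7


# Hypothetical layer 3/4 rule: the app server may reach the API host on 443/tcp.
L4_RULES = {("10.0.1.10", "10.0.2.20", 443, "tcp")}
# Hypothetical layer 7 rule: only read access to one endpoint is permitted.
L7_RULES = {("GET", "/payroll/hours")}


def l4_allows(req: Request) -> bool:
    """Decide using only addresses, port and protocol."""
    return (req.source_ip, req.dest_ip, req.dest_port, req.protocol) in L4_RULES


def l7_allows(req: Request) -> bool:
    """Decide using the layer 3/4 rule plus the HTTP method and path."""
    return l4_allows(req) and (req.http_method, req.http_path) in L7_RULES


read_hours = Request("10.0.1.10", "10.0.2.20", 443, "tcp", "GET", "/payroll/hours")
delete_all = Request("10.0.1.10", "10.0.2.20", 443, "tcp", "DELETE", "/payroll/hours")

# Both requests look identical at layers 3 and 4, so that rule cannot tell them apart.
print(l4_allows(read_hours), l4_allows(delete_all))
# Only the layer 7 check distinguishes the read from the delete.
print(l7_allows(read_hours), l7_allows(delete_all))
```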

Some leading microsegmentation players

We highlight just a few of the companies with leading solutions below.

Guardicore – Our research indicates Tel Aviv-based Guardicore’s Centra platform has momentum in the market, making this company a player to watch in the space. It offers an agent-based approach to microsegmentation that appears to be relatively straightforward and easy to deploy. Founded in 2013, the company has received over $100 million in venture funding and also has offices in Boston and San Francisco.

Illumio – Founded in 2012 and with over $330 million of venture funding to date, Sunnyvale-based Illumio is another relatively pure-play data center microsegmentation player to watch. Illumio Core (recently rebranded from Illumio Adaptive Security Platform) is an agent-based platform with solid visualization and workflow grouping capabilities. In the past year, Illumio released Illumio Edge and announced a partnership with CrowdStrike (CRWD) that extended its focus beyond data center microsegmentation, suggesting it has broader network security aspirations as it grows.

Palo Alto Networks (PANW) – Santa Clara-based Palo Alto undertook a deliberate effort to enhance its cloud and third-party data center capabilities when Nikesh Arora joined as CEO in mid-2018. The company has since made numerous acquisitions in pursuit of this goal, but we view two deals announced in 2019 – container security specialist Twistlock and microsegmentation specialist Aporeto – as having been key to building out its now substantial cloud-based microsegmentation capabilities. Palo Alto focuses on identity-based microsegmentation, and due to its broad portfolio of security solutions, it can tightly integrate and coordinate security policy across an enterprise’s infrastructure.

ShieldX – Founded in 2015, San Jose-based ShieldX has received over $30 million in venture funding and has migrated into security microsegmentation in recent years. Unlike most solutions profiled in this report, ShieldX’s Elastic Security Platform is a network-based (agentless) solution providing application-level visibility and control. It is also built with a microservices architecture that scales efficiently. We think its approach has some technological differentiation but believe the product is less mature than some of its rivals in terms of user interface and ease of use. Combining this technology into a more established company’s portfolio through partnership or acquisition would make sense in our view.

VMware (VMW) – As a pioneer in virtualization and as a part of cloud data centers from their inception, Palo Alto-based VMware, with its NSX solution, is a well-tested and widely deployed option. A distinguishing feature is full visibility and control throughout the IT stack, including both transport (layer 4) and application (layer 7), and it also boasts integrations with many third-party systems. However, this sophistication comes with a level of complication in licensing, installation and management that we think may be avoided with some of the other options on the market.

Zscaler (ZS) – San Jose-based Zscaler was early both in understanding the security implications of migrating networks to the cloud and in applying security to cloud, multi-cloud and hybrid architectures, so we think its efforts in zero-trust microsegmentation are noteworthy. It enhanced its established Zscaler Workload Segmentation product last year with its acquisition of Edgewise Networks, which automates creating identity-based policies, and we think Zscaler will be an increasingly relevant player in the market going forward.

See heavy investment, new products and M&A ahead

Given the importance of locking down lateral data flows, the huge number of variables involved, and the complex trade-offs among solution approaches, we are not surprised to hear that many enterprises have microsegmentation on their priority list but have achieved only limited implementation to date. We believe better solutions are needed and microsegmentation will continue to see significant R&D investment and product introductions. And given microsegmentation policy enforcement is best done in coordination with other cybersecurity efforts across the enterprise, we expect some of the leading broad-based security solution players will continue to acquire and invest in this area.
