Quarterly insights: Cybersecurity

Detection solutions prevent the spread of harmful deepfakes

Deepfake creation technology has evolved significantly from the rudimentary face swaps that first allowed everyday users to create low-quality deepfakes in the mid-2010s. Since then, deepfake creators, including bad actors, have developed a variety of creation methods, and the technology continues to evolve rapidly.

Governments, individuals and corporations are eager to find ways to stop malicious deepfakes, given their sometimes enormous monetary and societal costs. Deepfake detection companies address this need. They essentially reverse engineer the deepfake creation process to identify manipulated content.

The criteria for choosing among deepfake detection solutions vary based on use case. We discuss use cases in news media, law enforcement and other governmental functions, banking, and general commerce. Each differs in the level and type of deepfake detection it needs.

We highlight a sample of large technology companies that offer deepfake detection solutions, as well as some deepfake specialists, including three for which we provide detailed profiles.

TABLE OF CONTENTS

- Growing rapidly, harmful deepfakes extract high monetary and societal costs

- Deepfake creation models continue to grow in complexity, creating more convincing fakes

- Combatting malicious deepfakes with detection software

- Use cases influence buying behavior

- Some players in the deepfake detection market

- The truth is out there

- Sector market activity: cybersecurity index performance, M&A activity highlighting Talon Cyber Security and Tessian, and private placement activity highlighting SimSpace and Phosphorus

Includes discussion of Deepware AI, DuckDuckGoose, Intel (INTC), Microsoft (MSFT), Pindrop, Reality Defender, Sentinel, Sensity and WeVerify.

Growing rapidly, harmful deepfakes extract high monetary and societal costs

Deepfakes are synthetic media generated by artificial intelligence (AI), created either entirely anew or by modifying real content, to produce compelling imitations of reality. They can take many forms, including photos, videos and audio recordings. Deepfakes make it difficult to distinguish fact from fiction. According to SumSub, an identity verification and fraud prevention company, the incidence of deepfakes was 10 times greater in 2023 than in 2022, clear evidence that deepfake creation technology is being used more than ever.

Although most sentiment around deepfakes is negative, the technology can be beneficial. One example is marketing, where actors can license their identities so marketers can generate advertisements swiftly and cost-effectively with deepfake technology instead of requiring the actors to perform. Another example is using deepfake creation technology to personalize ad content based on individual customers’ preferences and demographics. Beyond marketing, deepfake technology is increasingly used in entertainment content such as television shows, movies and podcasts, where it manipulates actors’ appearances and facial expressions to best fit production needs.

Of course, deepfake technology is also often used to cause harm, a vivid example being the unauthorized use of people’s likenesses in pornography. In fact, most current deepfake regulation in the United States deals with banning its use for nonconsensual pornography. For purposes of this report, however, the most relevant harmful uses of deepfake technology are influencing geopolitical events and public policy and perpetrating fraud.

For example, in 2022 hackers created and published a deepfake video of Ukrainian President Volodymyr Zelenskyy urging Ukrainians to lay down their arms in the conflict with Russia. (This deepfake was quickly identified and removed.) In early 2019, suspicion that a video of Ali Bongo, president of Gabon, was a deepfake played a role in sparking an attempted military coup there. Many more examples are being found and reported regularly.

In the context of fraud, the Federal Trade Commission reported that imposter scams resulted in $2.6 billion in losses in 2022, affecting over 36,000 victims. A familiar example is bad actors who impersonate grandchildren and urgently ask grandparents for money. In the corporate world, the CEO of a UK-based energy company received what he thought was a call from his parent company’s CEO asking him to wire money to a Hungarian supplier. The CEO believed he recognized the voice and transferred the funds, not realizing the voice was generated by AI; the money was lost.

Deepfake creation models continue to grow in complexity, creating more convincing fakes

Deepfake creation technology has evolved significantly from the rudimentary face swaps that first allowed everyday users to create low-quality deepfakes in the mid-2010s. Since then, deepfake creators have developed a variety of creation methods, and the technology continues to evolve rapidly. Three types of machine learning models, all neural networks that learn from data, are currently prevalent for creating deepfakes: autoencoders, generative adversarial networks and diffusion models.

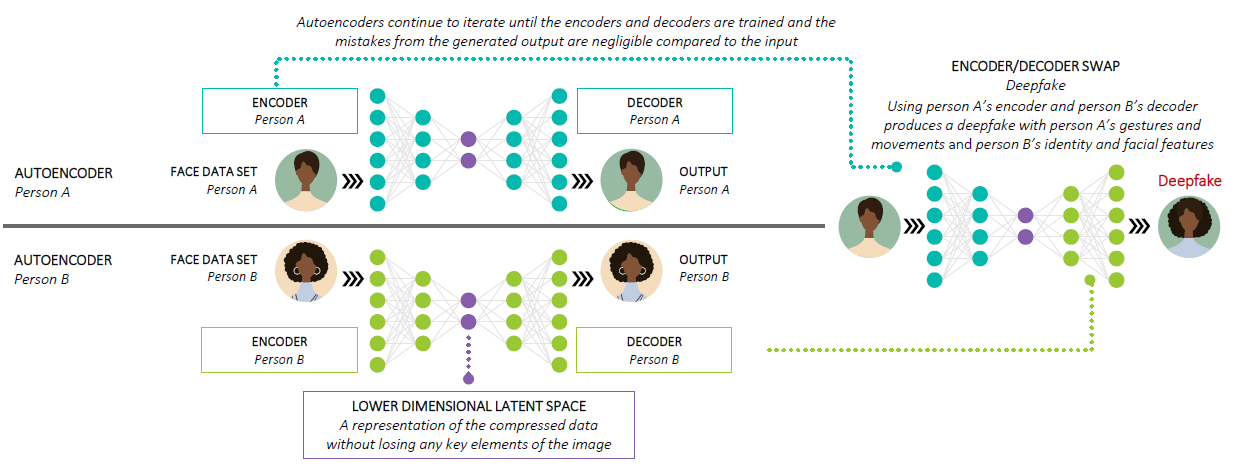

Autoencoders, also known as encoder-decoders, are unsupervised machine learning techniques (they learn from unlabeled data by trying to reproduce their own input) and are simpler than the other two prevalent deepfake creation methods. This technology is used by DeepFaceLab and FaceSwap, two of the most widely used open-source software frameworks for creating deepfakes. The process involves compiling two data sets, each with many images of one person’s face. Images from both data sets pass through a shared encoder that reduces each image to a lower-dimensional latent space, essentially a compressed representation of the data that retains its vital elements. Each data set has its own decoder, which reconstructs the latent representation into an image that closely resembles the original. Training runs iteratively, comparing each output with its input, until the model can replicate the input with minimal mistakes. Once the encoder and both decoders are trained, the decoders can be switched to perform the face swap. For instance, a deepfake of person A’s identity and facial features with person B’s movements and gestures would pass person B’s image through the shared encoder and use person A’s decoder to decode the latent representation.

Autoencoder illustration

Source: First Analysis.
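To make the swap mechanics concrete, here is a minimal sketch in Python with NumPy. It assumes the common face-swap setup in which one encoder is shared by both data sets and each person has a separate decoder. It replaces the deep convolutional networks that tools such as DeepFaceLab and FaceSwap train by gradient descent with a hypothetical linear stand-in: the encoder is computed by singular value decomposition and each decoder by least squares. The 12-value "frames" are invented toy data in which the first three values carry pose and the rest carry identity; none of the names come from those tools.

```python
import numpy as np

rng = np.random.default_rng(0)
DIM, LATENT, N = 12, 3, 100  # toy "image" size, latent size, frames per person


def make_frames(identity, poses):
    # Hypothetical toy frame: pose/expression values followed by identity values.
    return np.hstack([poses, np.tile(identity, (len(poses), 1))])


identity_a = rng.normal(size=DIM - LATENT)
identity_b = rng.normal(size=DIM - LATENT)
faces_a = make_frames(identity_a, rng.normal(size=(N, LATENT)))
faces_b = make_frames(identity_b, rng.normal(size=(N, LATENT)))

# Shared linear encoder (stand-in for a trained network): the top principal
# directions of frame-to-frame variation, pooled across both people after
# removing each person's mean, i.e., what changes between frames.
centered = np.vstack([faces_a - faces_a.mean(axis=0), faces_b - faces_b.mean(axis=0)])
_, _, vt = np.linalg.svd(centered, full_matrices=False)
encoder = vt[:LATENT].T  # DIM x LATENT projection


def encode(frames):
    return frames @ encoder  # compress each frame to a LATENT-sized code


def fit_decoder(faces):
    # Per-person linear decoder fitted by least squares from [code, 1] to frames;
    # the constant column lets each decoder supply its own person's identity.
    codes = np.hstack([encode(faces), np.ones((len(faces), 1))])
    weights, *_ = np.linalg.lstsq(codes, faces, rcond=None)
    return lambda z: np.hstack([z, np.ones((len(z), 1))]) @ weights


decode_a, decode_b = fit_decoder(faces_a), fit_decoder(faces_b)

# Face swap: encode a new frame of person B with the shared encoder,
# then decode it with person A's decoder.
new_pose = rng.normal(size=(1, LATENT))
frame_b = make_frames(identity_b, new_pose)
swapped = decode_a(encode(frame_b))  # A's identity with B's pose
```

Because each decoder has only ever learned to draw its own person, feeding it a code computed from person B's frame yields person A's identity in person B's pose. Real faces do not separate into pose and identity this neatly, which is one reason production tools need large data sets and long training.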

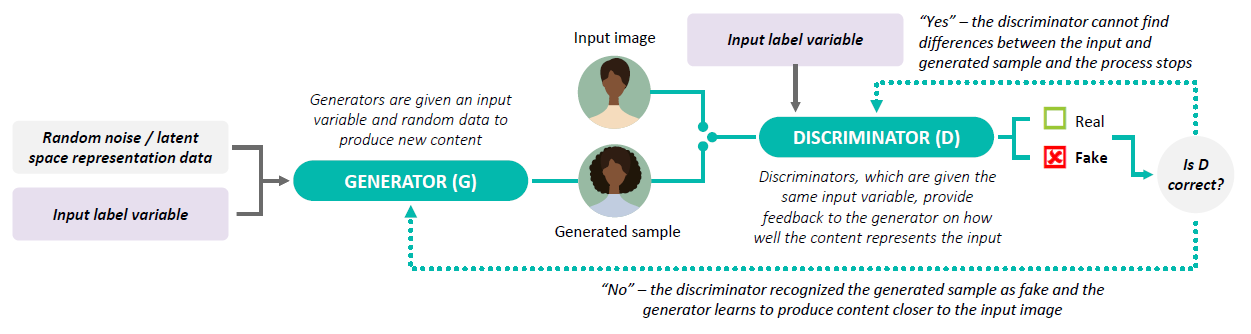

Generative adversarial networks (GANs), first described in 2014, use a more advanced method. They use two algorithms that compete with each other to produce deepfakes. A generator algorithm takes random input, typically a noise vector sampled from a low-dimensional latent space, and creates new content from it. Meanwhile, a discriminator algorithm, trained on real examples, evaluates the generated content and outputs a probability that it is real rather than fake; this probability serves as feedback to the generator. The process executes iteratively: each time, the generator adjusts how it turns latent inputs into features such as colors, shapes and lines so as to raise the probability the discriminator assigns, while the discriminator keeps improving at telling real content from generated content. Eventually, the generator produces content the discriminator can no longer distinguish as real or generated. Conditional GANs (CGANs) provide an additional input label, such as male, female, cat or dog, to both the generator and the discriminator to produce tailored deepfakes. GANs require a substantial amount of data to effectively capture content features for deepfake creation.
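The adversarial setup above is often written as a two-player objective. The following is the standard formulation from the original GAN work, shown for reference, where D(x) is the discriminator's probability that x is real, G(z) is the generator's output for a random latent input z drawn from a distribution p_z, and p_data is the distribution of real content:

$$
\min_G \max_D \; V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big] + \mathbb{E}_{z \sim p_z}\big[\log\big(1 - D(G(z))\big)\big]
$$

A conditional GAN feeds the same label y (for example, "cat") to both networks:

$$
\min_G \max_D \; \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x \mid y)\big] + \mathbb{E}_{z \sim p_z}\big[\log\big(1 - D(G(z \mid y) \mid y)\big)\big]
$$

The discriminator pushes V up by scoring real content near 1 and generated content near 0; the generator pushes it down by making D(G(z)) approach 1.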

While GANs and autoencoders can produce compelling deepfakes, they have shortcomings. Autoencoders are relatively slow to train, and GAN models may fail to converge or may face a vanishing gradient problem. A vanishing gradient occurs when the gradients passed back from the discriminator, which tell the generator how to adjust its parameters to reduce its errors, become too small, leaving the generator with insufficient feedback to improve the generated image and continue learning. At that point, the generator keeps producing similar flawed images because the discriminator’s feedback is too weak to drive the necessary adjustments. Additionally, these generative models struggle to generate high-resolution content.

Conditional generative adversarial network illustration

Source: First Analysis.
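One widely cited source of the vanishing-gradient problem described above can be shown in a few lines of Python. If the discriminator's output is written as D = sigmoid(a), where a is its raw score (logit), the generator's original loss log(1 - D) has gradient -sigmoid(a) with respect to that score, which shrinks toward zero exactly when the discriminator confidently rejects a fake. The sketch compares it with the non-saturating alternative, -log(D), that is commonly used in practice. It only evaluates these two formulas and does not train a GAN.

```python
import math


def sigmoid(logit):
    return 1.0 / (1.0 + math.exp(-logit))


def minimax_generator_grad(logit):
    # d/d(logit) of the generator's original loss log(1 - D), with D = sigmoid(logit)
    return -sigmoid(logit)


def non_saturating_generator_grad(logit):
    # d/d(logit) of the commonly used alternative generator loss -log(D)
    return -(1.0 - sigmoid(logit))


# A very negative logit means the discriminator is confident the sample is fake.
for logit in [0.0, -2.0, -5.0, -10.0]:
    print(
        f"D(G(z))={sigmoid(logit):.5f}  "
        f"minimax grad={minimax_generator_grad(logit):+.5f}  "
        f"non-saturating grad={non_saturating_generator_grad(logit):+.5f}"
    )
```

As the discriminator grows more certain, the original loss gives the generator almost no signal, while the alternative keeps a gradient near -1. This is one reason the choice of loss matters for GAN training stability.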

Diffusion models have seen increasing use for generating deepfakes due to their greater stability during training and their success in producing higher-quality fake images and audio. Training begins with an input image, where each pixel has an initial intensity value. The diffusion model then adds a controlled amount of random noise, typically drawn from a Gaussian (normal) distribution, to each pixel over many small steps. This continues until the image becomes an entirely random, unstructured pattern of pixels, known as pure noise. A neural network is then trained to reverse this process step by step, turning pure noise into a clean sample. During training, the network’s predictions are compared with the known answer (the noise that was added, or equivalently the original image), and its parameters are refined to shrink the difference, eliminating imperfections and enhancing the quality of the resulting deepfakes. One key benefit of diffusion models is their ability to use low-resolution images or random noise to generate a corresponding high-resolution image.
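The forward (noising) half of this process can be sketched in a few lines of NumPy. The sketch assumes the Gaussian noise schedule popularized by denoising diffusion probabilistic models (DDPM), with 1,000 steps and per-step noise variance rising linearly from 0.0001 to 0.02; under that assumption, the noisy image at any step can be computed in one jump. The neural network that learns the reverse process is omitted, and the `denoising_loss` helper only shows the mean-squared-error objective such a network is commonly trained on: predicting the noise that was added. The random "image" is a stand-in, not real data.

```python
import numpy as np

T = 1000
betas = np.linspace(1e-4, 0.02, T)    # per-step noise variance (a common linear schedule)
alpha_bars = np.cumprod(1.0 - betas)  # share of original signal variance left after step t


def add_noise(x0, t, rng):
    """Jump directly to step t: x_t = sqrt(a_bar_t) * x0 + sqrt(1 - a_bar_t) * eps."""
    eps = rng.standard_normal(x0.shape)
    xt = np.sqrt(alpha_bars[t]) * x0 + np.sqrt(1.0 - alpha_bars[t]) * eps
    return xt, eps  # eps is the target a denoising network learns to predict


def denoising_loss(predicted_eps, true_eps):
    # Mean squared error between the network's predicted noise and the actual noise
    return float(np.mean((predicted_eps - true_eps) ** 2))


rng = np.random.default_rng(0)
image = rng.uniform(-1.0, 1.0, size=(64, 64))  # stand-in for a normalized grayscale image

for t in [0, 250, 500, T - 1]:
    xt, eps = add_noise(image, t, rng)
    corr = np.corrcoef(image.ravel(), xt.ravel())[0, 1]
    print(f"t={t:4d}  signal weight={np.sqrt(alpha_bars[t]):.3f}  corr with original={corr:+.3f}")
```

The correlation with the original falls from nearly 1 at the first step toward 0 at the last, the "pure noise" endpoint from which a trained network would start generating.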

Combatting malicious deepfakes with detection software

Given malicious deepfakes’ sometimes enormous monetary and societal costs, governments, individuals and corporations are eager to find ways to stop them. Examples include journalists and media companies that need to avoid using or being misled by deepfakes, particularly given mass media’s potential to amplify deepfakes’ harmful intent. As a result, deepfake detection companies have emerged to help analyze and detect deepfakes across content types, including video, audio, still images, and text. Most deepfake detection companies implement their solutions through web-based applications, application programming interfaces (APIs) that integrate with their customers’ identity verification systems, or browser extensions. Deepfake detection providers include pure-play providers, full-suite cybersecurity companies, and large diversified technology companies. They typically price based on the number of images, videos, audio recordings or written texts analyzed or based on number of users.

Deepfake detection companies essentially reverse engineer the deepfake creation process to identify manipulated content. After training their proprietary models, detection companies run the content they evaluate through those models to spot irregularities and intricate patterns and determine whether content is likely to be a deepfake. A detection model is generally only as good as its training on a given kind of deepfake, meaning it may struggle to detect creation methods it has not encountered before. Companies may retrain older detection models on more diverse, higher-quality data sets to better detect novel deepfake methods. Some companies use multiple models to provide more comprehensive detection.

In addition to using models to analyze content, detection companies look for the many tell-tale signs of deepfake content:

- In a video of a person talking, the shape of the person’s mouth may fail to match the sound associated with it, referred to as a phoneme-viseme mismatch.

- A person’s image may show unnatural facial expressions or unnatural eye, face or body movements.

- A person’s image may be blurry or discolored, include fake-looking hair or teeth, or display a lack of emotion.

- A person’s image may display biological abnormalities, like skin tone changes or abnormal breathing.

- A still or video image background may contain irregularities, such as abnormal shadows.

- Audio content may include odd tones, frequencies or intensities, or unusual statistical properties such as kurtosis (which can reflect ambient and transient noise) or skewness (often evidenced by crackling or popping sounds).
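Skewness and kurtosis in the last item are standard statistics of a signal's amplitude distribution: skewness measures lopsidedness, and excess kurtosis measures how heavy the tails are relative to Gaussian noise, which scores 0. The minimal NumPy sketch below computes both for one second of a synthetic 220 Hz tone, with and without hypothetical popping artifacts. The signal, the 16 kHz sample rate and the artifacts are invented for illustration, and this is not any vendor's detection method.

```python
import numpy as np


def skewness_and_kurtosis(samples):
    """Sample skewness and excess kurtosis of an audio signal's amplitude values."""
    x = np.asarray(samples, dtype=float)
    centered = x - x.mean()
    std = centered.std()
    skew = np.mean(centered**3) / std**3
    excess_kurtosis = np.mean(centered**4) / std**4 - 3.0  # 0 for Gaussian noise
    return float(skew), float(excess_kurtosis)


rng = np.random.default_rng(0)
sr = 16_000                          # samples per second
t = np.arange(sr) / sr               # one second of audio
clean = 0.5 * np.sin(2 * np.pi * 220 * t) + 0.01 * rng.standard_normal(sr)  # tone plus faint hiss

# Hypothetical artifact: 20 brief positive "pops" at random positions.
popping = clean.copy()
popping[rng.choice(sr, size=20, replace=False)] += 3.0

print("clean   skew=%.2f  excess kurtosis=%.2f" % skewness_and_kurtosis(clean))
print("popping skew=%.2f  excess kurtosis=%.2f" % skewness_and_kurtosis(popping))
```

The injected pops push skewness positive and raise excess kurtosis well above the clean tone's (a pure sine has excess kurtosis of -1.5). A detector might treat a shift like this as one signal among many rather than as proof of manipulation.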

The deepfake detection companies we focus on in this report analyze content their customers specifically identify as suspect rather than ingesting entire bodies of content. This approach predominates today, but we envision a time in the near future when customers will want to scour broad swaths of media for deepfakes mimicking their brands, images or other intellectual property, particularly as deepfakes start to penetrate advertising and other protected content. In this context, digital brand protection companies will seek to add deepfake detection capabilities. For example, First Analysis venture capital portfolio company Tracer, which already scours diverse media for unauthorized and sometimes fraudulent use of its clients’ intellectual property, is developing and plans to add proprietary deepfake detection technology to its arsenal.

Use cases influence buying behavior

The criteria for choosing among deepfake detection solutions vary based on use case. For instance, journalists generally care only whether a digital media file has a reasonable likelihood of being fake so they can decide whether to rely on it or seek alternative sources; as such, their needs rarely go beyond a confidence score on whether the media is real. Law enforcement and government professionals often need deeper analysis. They may need to prove in court which portions of content have been manipulated and how they reached this conclusion. To maintain the public’s confidence, government professionals may also need to give the public evidence substantiating that content is, indeed, fake.

Use cases related to financial fraud are quite different. In banking, many institutions have invested significant sums to build voice or facial recognition into their routine authorization or “know-your-customer” workflows and have little ability to change their processes in the immediate term. With the advent of deepfake technology, they have a compelling need for deepfake detection technology that excels at analyzing such well-defined, narrow content types with high precision and speed. We think this could be one of the largest near-term vertical markets.

Stopping fraud that involves using deepfake technology to impersonate trusted parties in conversation-based transactions (such as a CEO requesting a wire transfer or a grandchild asking a grandparent for money) demands different detection technology. This has proven to be a tougher problem to solve and, to date, one that is difficult to monetize, but we believe it represents a substantial opportunity for companies that develop effective solutions.

We believe there is some risk the fraud-related deepfake detection market may diminish in the long term, particularly for banking and other transaction authorization purposes. Based on our research, deepfake detection technology has lagged behind deepfake creation technology despite significant advances in detection methods and AI models. If deepfake detection technology cannot sustainably outpace creation technology, institutions will likely shift away from authorization processes and technologies that rely on media subject to being deepfaked, obviating their need for deepfake detection. Future legal and regulatory developments are also likely to influence how this market evolves. But re-engineering existing transaction workflows would take significant time and investment, so we foresee solid near-term demand for fraud-related deepfake detection technology. Such demand should increase the value of quality pure-play deepfake detection companies and attract strategic interest from large, broader technology and cybersecurity companies looking to enhance their product suites with differentiated technology.

Some players in the deepfake detection market

The deepfake detection market features numerous companies. Below, we briefly highlight some large technology companies that offer deepfake detection solutions as well as some deepfake specialists, and we provide detailed profiles of three other pure-play specialists.

- Sentinel provides image, video and audio deepfake detection software through its website and API. Users can upload media to be analyzed for evidence of synthetic content and receive reports detailing findings and visualizations showing where media has been altered.

- Intel (INTC) offers FakeCatcher to detect fake videos. It assesses human characteristics such as blood flow signals in the face and eye gaze to detect deepfakes, and it can analyze up to 72 streams concurrently.

- Microsoft (MSFT) offers a video authenticator tool that analyzes still photos and videos to provide real-time confidence scores indicating whether media has been manipulated. It also offers a tool built into Azure that enables content producers to digitally watermark content.

- WeVerify uses a web browser plug-in to provide human-in-the-loop content verification and disinformation analysis to expose fabricated content in text, still images and videos.

- Deepware AI’s technology analyzes videos and still images for evidence of manipulated content.

- Sensity offers technology for verifying identity documents, face-matching, liveness checking, detecting fraudulent documents, and detecting deepfakes in still images, video and audio. It delivers its solutions via a web-based application, APIs, and on-premise software.
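Blood-flow signals of the kind mentioned for Intel's FakeCatcher are generally extracted with remote photoplethysmography (rPPG): as the heart beats, the color of facial skin changes very slightly, and averaging a face region's pixel intensity frame by frame yields a faint periodic trace. A detector can then check whether a plausible, consistent pulse is present. The minimal NumPy sketch below shows only the pulse-extraction step on a synthetic green-channel trace; face tracking, region selection and the real-versus-fake decision are omitted, and the function name and numbers are hypothetical rather than Intel's implementation.

```python
import numpy as np


def estimate_pulse_bpm(green_means, fps):
    """Estimate heart rate from per-frame mean green intensity over a face region."""
    signal = np.asarray(green_means, dtype=float)
    signal = signal - signal.mean()                    # remove the constant skin-tone level
    spectrum = np.abs(np.fft.rfft(signal))
    freqs = np.fft.rfftfreq(len(signal), d=1.0 / fps)  # frequency of each FFT bin, in Hz
    band = (freqs >= 0.7) & (freqs <= 4.0)             # plausible human pulse: 42-240 bpm
    peak_hz = freqs[band][np.argmax(spectrum[band])]
    return 60.0 * peak_hz


fps, seconds = 30, 10
t = np.arange(fps * seconds) / fps
rng = np.random.default_rng(0)
# Hypothetical trace: faint 1.2 Hz (72 bpm) pulse riding on skin tone, plus camera noise.
trace = 120.0 + 0.3 * np.sin(2 * np.pi * 1.2 * t) + 0.1 * rng.standard_normal(t.size)
print(f"estimated pulse: {estimate_pulse_bpm(trace, fps):.0f} bpm")
```

Restricting the search to a physiological frequency band keeps slow lighting drift and fast sensor noise from being mistaken for a heartbeat.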

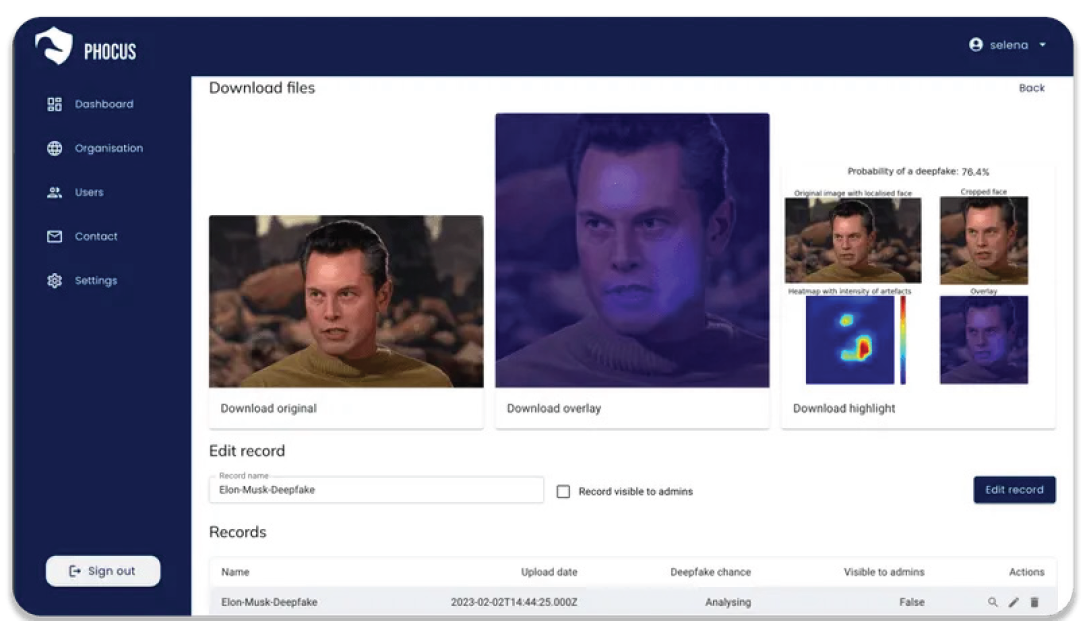

Founded in 2020 and based in Delft, Netherlands, DuckDuckGoose is an early-stage deepfake detection company that uses extendable neural networks to detect a variety of deepfakes via several solutions. DeepDetector, DuckDuckGoose’s flagship product, detects deepfake images and videos in real time by integrating into customers’ existing identity verification systems. Its AI voice detector helps identify synthetic audio, including speech generated by text-to-speech software, providing real-time voice verification to help secure calls. Its AI text detection module helps flag content originating from AI text generators. For those with simpler deepfake use cases, like journalists, its DeepfakeProof is a free browser plugin that automatically scans visited webpages to detect manipulated media. Lastly, its Deepfake Maker creates high-quality deepfakes customers can use to test their own systems (it also helps the company improve its broader detection platform).

DuckDuckGoose’s detection platform provides the potential probability of a deepfake, accompanied by activation maps that highlight manipulated elements

Source: DuckDuckGoose.

Currently, the company’s target customers are banks and governments with high identity security needs, but it also supports journalists, law enforcement personnel, and others seeking to detect deepfakes. Law enforcement personnel, in particular, require forensic evidence when alleging use of deepfakes in investigations and legal disputes. While all deepfake detection companies identify content that is likely to be a deepfake, DuckDuckGoose’s differentiated activation maps highlight the most suspicious areas of an image or video and explain which type of deepfake method was likely used. With this information, investigators can more accurately assess content authenticity and substantiate their conclusions. DuckDuckGoose prices its solution based on expected volume of content analyzed, type of content (image or video), and output type (explainable AI or deepfake probability classification).
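How DuckDuckGoose computes its activation maps is not described here; in neural networks such maps are often derived from model internals (Grad-CAM is one well-known method). A simpler, model-agnostic way to get a comparable "where is the suspicious region" map is occlusion sensitivity: blank out one patch at a time and record how much the detector's score drops. The minimal NumPy sketch below applies that swapped-in technique to a hypothetical toy detector that merely scores high-frequency energy, on a synthetic image with one noisy "manipulated" patch.

```python
import numpy as np


def toy_detector(image):
    """Hypothetical stand-in detector: higher score = more 'fake-looking'.
    It only measures mean pixel-to-pixel change, a crude artifact cue."""
    return float(np.abs(np.diff(image, axis=0)).mean() + np.abs(np.diff(image, axis=1)).mean())


def occlusion_map(image, detector, patch=8):
    """Score drop when each patch is flattened to its mean: high value = suspicious region."""
    base = detector(image)
    h, w = image.shape
    heat = np.zeros((h // patch, w // patch))
    for i in range(0, h - patch + 1, patch):
        for j in range(0, w - patch + 1, patch):
            occluded = image.copy()
            occluded[i:i + patch, j:j + patch] = image[i:i + patch, j:j + patch].mean()
            heat[i // patch, j // patch] = base - detector(occluded)
    return heat


rng = np.random.default_rng(0)
img = np.tile(np.linspace(0.0, 1.0, 64), (64, 1))        # smooth synthetic image
img[16:24, 40:48] += 0.5 * rng.standard_normal((8, 8))   # hypothetical "manipulated" patch
heat = occlusion_map(img, toy_detector)
print(np.unravel_index(np.argmax(heat), heat.shape))     # (2, 5): row block 2, column block 5
```

The hottest cell of the map lines up with the altered patch, which is the kind of localized evidence an investigator can point to. Occlusion is slow for large images because it re-runs the detector once per patch, one reason gradient-based maps are popular in practice.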

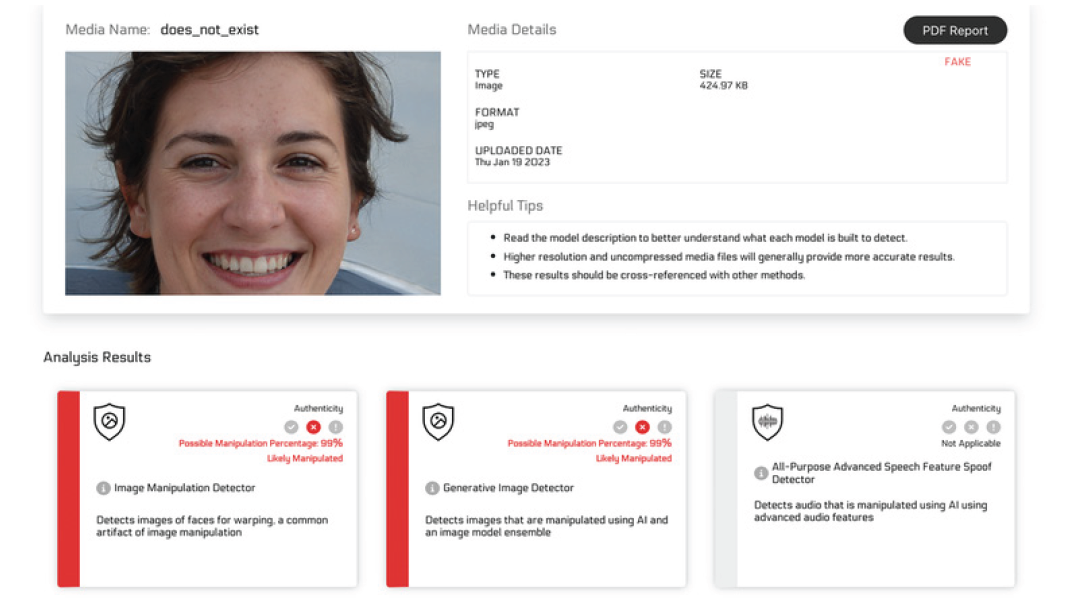

Founded in 2018, New York-based Reality Defender has raised over $22 million from Data Collective Ventures, Comcast Ventures, ex/ante, Partnership Fund for New York City, Rackhouse Ventures, Parameter Ventures, and Nat Friedman’s AI Grant. Its deepfake detection platform, available via API, webhooks, and web applications, provides anti-fraud and know-your-customer solutions as well as solutions aimed at preventing the spread of misinformation. Reality Defender’s products support governments, banks, social media platforms, journalists and academics who have critical identity, authentication, and fraud-prevention needs. The company charges an annual license fee based on the anticipated number of reads through the platform. If a customer’s actual usage exceeds the allocated limit, the allocation and pricing are adjusted for the following year.

Reality Defender’s ensemble model approach provides comprehensive coverage, capable of uncovering various deepfake creation methods

Source: Reality Defender.

Reality Defender uses a differentiated ensemble approach in which multiple models are individually trained to look for specific types of manipulation. This is particularly advantageous because complex deepfakes may slip past some detection algorithms but not others. Together, the models help identify image manipulation, face swaps, diffusion-generated images, GAN-generated images, video deepfakes, face blending, and speech synthesis. Each model works on its own to return a probabilistic score for deepfake content. Customers can then assess content’s authenticity and, if it is likely not authentic, which deepfake creation method likely generated it. To further help investigations related to AI-generated written text, the platform analyzes content and uses color to highlight text likely to have been generated by AI, saving significant time and energy for those validating content. The company also provides real-time alerts and downloadable reports to users via email or through the platform. Reality Defender plans to add explainable AI for audio, video and image deepfake detection in the coming months. This offering will highlight the deepfaked portions of videos, images and audio.
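Reality Defender does not describe here how it combines its models' scores, so the following is only a sketch of one plausible rule for an ensemble of specialist detectors. Taking the maximum flags content when any single specialist is confident and names the method that specialist was trained on; the mean is computed for comparison. The `ModelResult` type, the 0.5 threshold and the scores are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ModelResult:
    method: str          # manipulation type this specialist model was trained on
    probability: float   # model's estimated probability that the content is fake


def summarize(results, threshold=0.5):
    """Combine specialist scores: flag if any model is confident, and name the likeliest method."""
    top = max(results, key=lambda r: r.probability)
    mean_score = sum(r.probability for r in results) / len(results)
    return {
        "flagged": top.probability >= threshold,
        "likely_method": top.method if top.probability >= threshold else None,
        "max_score": top.probability,
        "mean_score": mean_score,
    }


results = [
    ModelResult("face swap", 0.12),
    ModelResult("GAN-generated image", 0.08),
    ModelResult("diffusion-generated image", 0.91),
    ModelResult("speech synthesis", 0.05),
]
print(summarize(results))
```

Here the mean score is only 0.29 even though one specialist is 91% confident, so simple averaging would have let a diffusion-generated fake pass; a max-style rule preserves the signal from whichever model matches the creation method.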

Likely the largest pure-play deepfake detection company, Atlanta-based Pindrop was founded in 2011 and has raised over $212 million from Vitruvian Partners, Allegion Venture, Cross Creek, Dimension Data, EDBI, Goldman Sachs, CapitalG, IVP, Andreessen Horowitz, GV and Citi Ventures. Pindrop’s audio authentication platform analyzes voice biometrics, identifies acoustic anomalies beyond voice, and examines audio metadata and background noise. Pindrop also provides a phone-printing engine that analyzes over 1,300 audio features to create distinct profiles, recognize customers, identify fraud, and recognize behavioral characteristics that may indicate manipulated content. The company’s newer Trace offering enables enterprises to view relationships between calls and customer accounts over time to better understand potential vulnerabilities and possible remediation. Pindrop’s target customers are financial institutions, insurance companies, call centers and companies offering connected devices such as TVs, cars and smart-home devices.

The truth is out there

In a world where artificial intelligence makes the reality of our daily interactions increasingly difficult to assess, deepfake detection technology is critical to individual and societal well-being. Fortunately, we see numerous companies stepping up with solutions. Given how pervasive AI promises to be (for bad as well as good), we think these companies will be addressing a large and compelling market opportunity for some time to come.

Thank you for your interest in First Analysis research.